Nike SNKRS Explorations

We worked with Nike's s23NYC team to experiment with new mobile technologies and explore unique in-app interactions that could be used to build hype around product releases.

My main role was to build functional prototypes for both the web and native devices to demonstrate their capabilities and bring our designers’ ideas to life, but I also contributed heavily to the early stages of concept generation. Not every project I worked on made it to production. Some remained purely vision work.

Our explorations led me to work with a breadth of technologies, including ARKit, Metal Shading Language, ThreeJS and Spark AR. The focus on AR was due in large part to Apple releasing the first version of ARKit around that time, which generated a lot of interest in related technologies. Many of the non-AR experiments used the sensors of mobile devices (like the gyroscope) in novel ways to surprise users with hidden interactions.

Unlocking Sneakers Through Easter Eggs

One project had us looking for ways to hide interactions throughout the SNKRS app that, when discovered, could grant the user access to limited sneaker releases. We drew inspiration from a mix of sneaker and sports cultures as well as inventive and unexpected uses of iOS APIs (the unequalled mobile game Black Box was a big inspiration). In concept, each idea could serve as a mini viral experience, with word spreading organically through social media.

A few of the standout concepts were…

Crash the Glass

Inspired by the iconic visual of a backboard shattering, this concept had users shake their phone, “shattering” the screen and revealing a hidden image underneath.

Frosting

If you left your phone idle and stationary for an extended period of time, the screen would appear to frost over. Once the frosting was complete, you could “wipe away” the frost with your finger to reveal a sneaker underneath. If you were impatient and picked up your phone too early, the image would reset and you would have to start over.
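The frost-and-reset behavior boils down to a small state update. The sketch below is illustrative only, not the prototype's code: `FrostState`, `stepFrost`, the motion threshold and the 60-second duration are all hypothetical, and `motionMagnitude` stands in for whatever motion reading the device sensors provide.

```typescript
// Hypothetical state for the frosting effect; not from the original prototype.
interface FrostState {
  progress: number;  // 0 = clear screen, 1 = fully frosted
  complete: boolean; // true once the frost can be wiped away
}

// Advance the frost by one time step.
// motionMagnitude: assumed motion reading (e.g. user acceleration in g).
// motionThreshold and secondsToFrost are made-up tuning values.
function stepFrost(
  state: FrostState,
  motionMagnitude: number,
  dtSeconds: number,
  motionThreshold: number = 0.05,
  secondsToFrost: number = 60
): FrostState {
  // Once fully frosted, picking up the phone no longer resets anything.
  if (state.complete) {
    return state;
  }
  // Moving the phone too early melts the frost and restarts the wait.
  if (motionMagnitude > motionThreshold) {
    return { progress: 0, complete: false };
  }
  const progress = Math.min(1, state.progress + dtSeconds / secondsToFrost);
  return { progress, complete: progress >= 1 };
}
```

Keeping the update a pure function of the previous state makes the timing easy to tune without a device in hand.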

High Heat

In this concept, you would simply rub an image of a sneaker as quickly as you could for a prolonged period of time, creating friction and heat. As fun as the idea was, it actually did produce an uncomfortable amount of heat from the friction and felt like you were sanding your fingertips off (this is why we prototype…they can’t all be winners).

Nike City

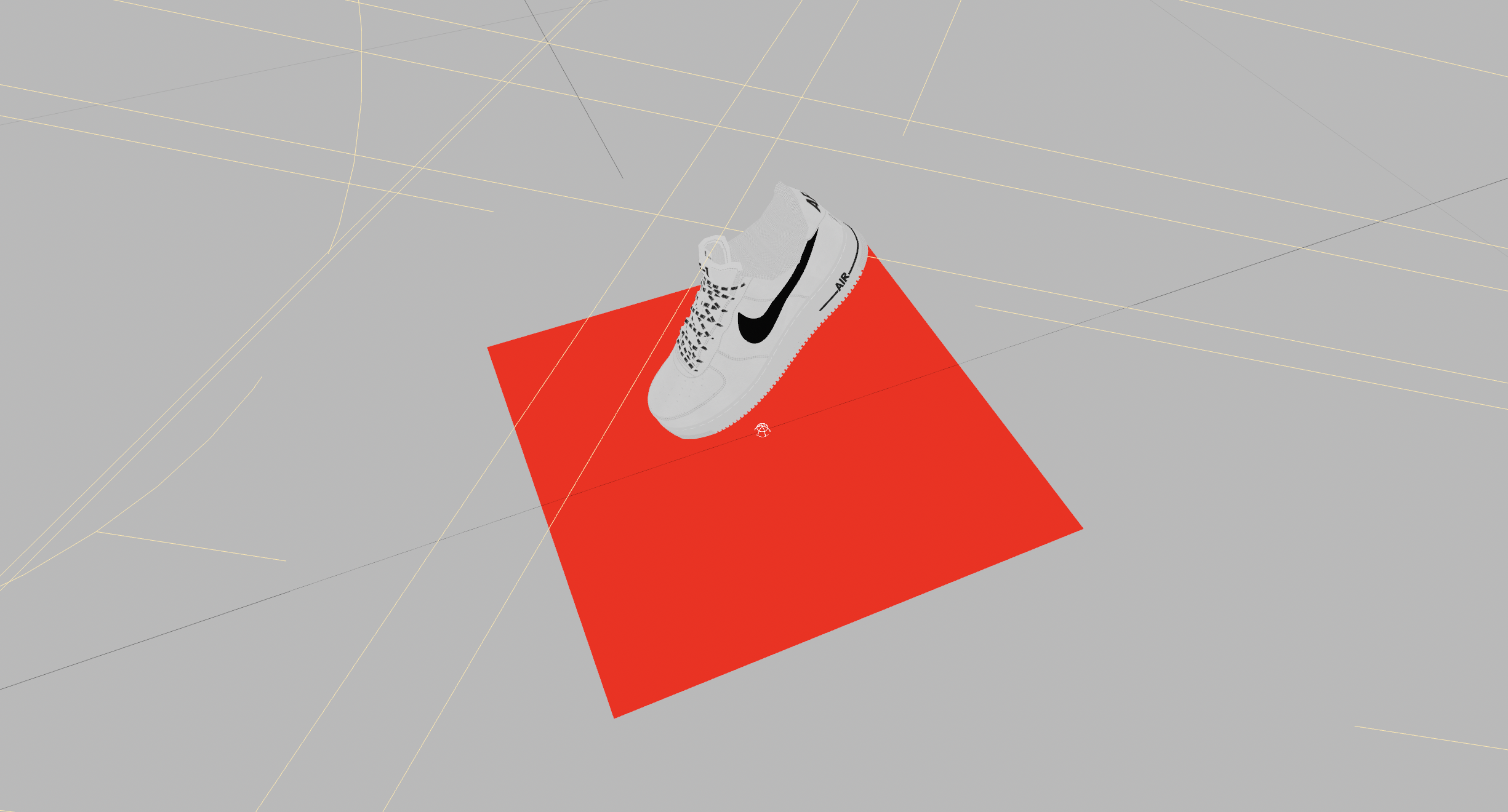

This idea centered around a collaborative AR experience in which players on-location in a city would be searching for a hidden, virtual sneaker drop, while players at home could view an AR map in their living room and follow along or help other players.

To simulate on-location gameplay, I built a prototype that let us place a virtual sneaker in Washington Square Park that would become hidden when you walked away and reappear as you got closer. We used this to film example footage of us “discovering” a sneaker.
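One way to express the hide-and-reappear behavior is as an opacity driven by the player's distance from the drop. This is a minimal sketch under stated assumptions, not the prototype's implementation: the `GeoPoint` type, the function names and both radii are hypothetical, and the distance uses the standard haversine formula.

```typescript
// Hypothetical types and values for illustration only.
interface GeoPoint {
  latitude: number;  // degrees
  longitude: number; // degrees
}

const EARTH_RADIUS_METERS = 6371000;

function toRadians(degrees: number): number {
  return (degrees * Math.PI) / 180;
}

// Great-circle distance between two coordinates (haversine formula).
function distanceMeters(a: GeoPoint, b: GeoPoint): number {
  const sinHalfLat = Math.sin(toRadians(b.latitude - a.latitude) / 2);
  const sinHalfLon = Math.sin(toRadians(b.longitude - a.longitude) / 2);
  const h =
    sinHalfLat * sinHalfLat +
    Math.cos(toRadians(a.latitude)) *
      Math.cos(toRadians(b.latitude)) *
      sinHalfLon * sinHalfLon;
  return 2 * EARTH_RADIUS_METERS * Math.asin(Math.min(1, Math.sqrt(h)));
}

// Fully visible inside revealRadius, hidden beyond hideRadius,
// with a linear fade in between. Both radii are assumed values in meters.
function sneakerOpacity(
  user: GeoPoint,
  drop: GeoPoint,
  revealRadius: number = 15,
  hideRadius: number = 40
): number {
  const d = distanceMeters(user, drop);
  if (d <= revealRadius) return 1;
  if (d >= hideRadius) return 0;
  return (hideRadius - d) / (hideRadius - revealRadius);
}

// Usage: approximate coordinates for Washington Square Park.
const drop: GeoPoint = { latitude: 40.7308, longitude: -73.9973 };
const player: GeoPoint = { latitude: 40.7310, longitude: -73.9973 };
const opacity = sneakerOpacity(player, drop);
```

Fading between two radii rather than toggling at a single threshold keeps the sneaker from flickering when location readings jitter near the boundary.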

In another prototype, I mocked up the at-home play experience by projecting a city onto the ground with fake player tokens in it. Holding your phone up, you could walk through the city and see how player tokens interacted with the camera. We used billboarded images so the tokens always faced the user. When you approached a token, we used your proximity to expand it into a mini-profile and lock its size in the viewport, which made it easier to interact with.
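Both token behaviors reduce to a little vector math. The sketch below is an illustration rather than the prototype's code: it assumes a y-up coordinate system in which a token's front faces +Z at zero rotation, and every name and threshold in it is hypothetical.

```typescript
// Hypothetical types and names for illustration only.
interface Vec3 {
  x: number;
  y: number;
  z: number;
}

function distanceBetween(a: Vec3, b: Vec3): number {
  const dx = a.x - b.x;
  const dy = a.y - b.y;
  const dz = a.z - b.z;
  return Math.sqrt(dx * dx + dy * dy + dz * dz);
}

// Billboarding: rotation about the vertical (Y) axis, in radians, that
// turns a token whose front faces +Z toward the camera.
function billboardYaw(token: Vec3, camera: Vec3): number {
  return Math.atan2(camera.x - token.x, camera.z - token.z);
}

// Under perspective projection, apparent size falls off as 1 / distance,
// so scaling by distance keeps the on-screen size constant.
// referenceDistance is the distance at which the scale equals 1.
function screenLockedScale(
  token: Vec3,
  camera: Vec3,
  referenceDistance: number = 1
): number {
  return distanceBetween(token, camera) / referenceDistance;
}

interface TokenPresentation {
  yaw: number;
  scale: number;
  expanded: boolean; // whether to show the mini-profile
}

// expandRadius (meters) is an assumed threshold for showing the profile.
function presentToken(
  token: Vec3,
  camera: Vec3,
  expandRadius: number = 1.5
): TokenPresentation {
  const expanded = distanceBetween(token, camera) <= expandRadius;
  return {
    yaw: billboardYaw(token, camera),
    scale: expanded ? screenLockedScale(token, camera) : 1,
    expanded,
  };
}
```

Rotating only about the vertical axis keeps tokens upright; a full look-at rotation would tilt them whenever the phone is held above or below them.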

AR Posters

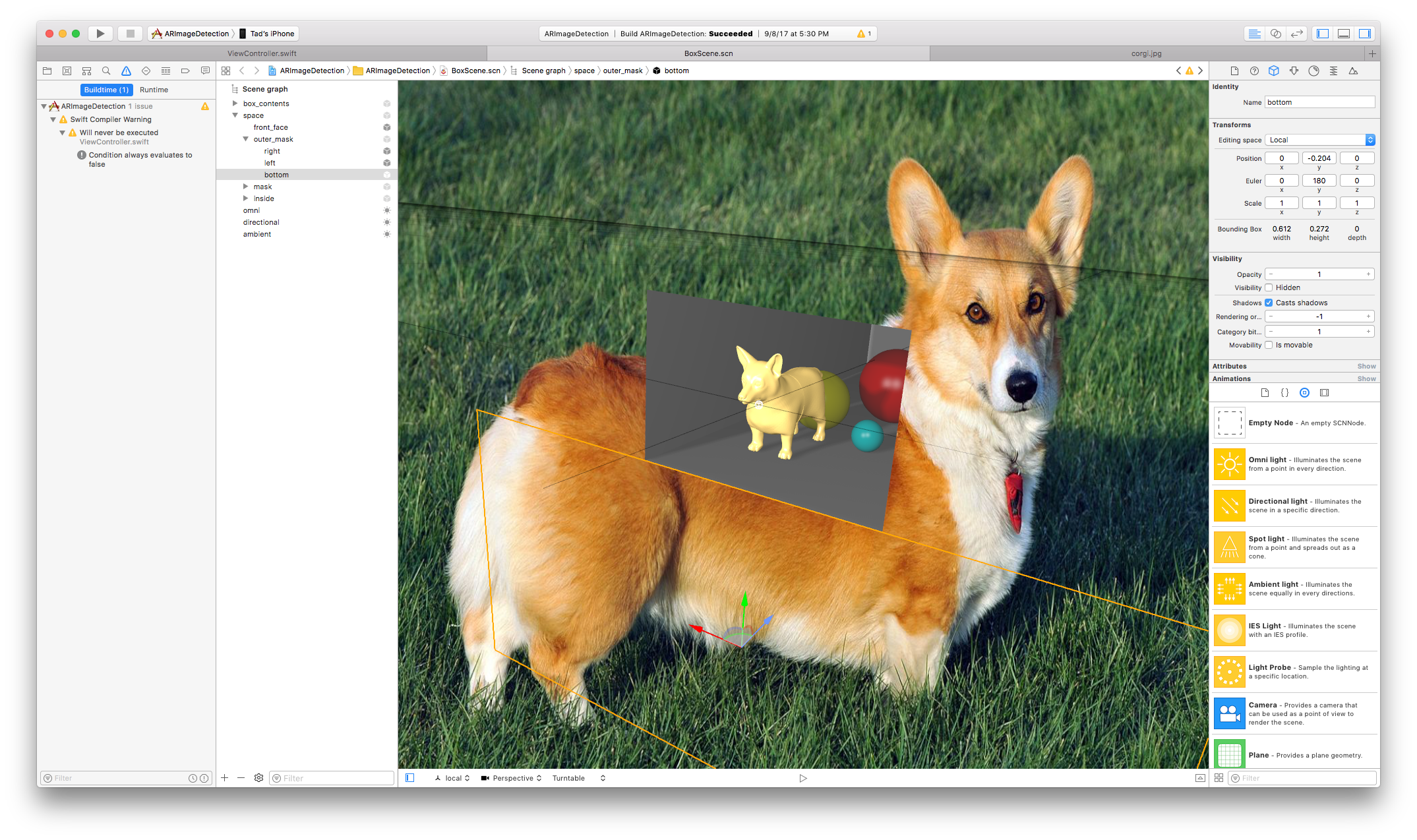

These tests of AR-enabled posters were interesting because ARKit version 1, which was still fairly new at the time, didn’t support vertical wall detection or robust image detection. Both recognizing the poster (using the markings around the edge as a pseudo-barcode) and placing an anchor point aligned to it were custom solutions I devised, using rectangle detection to find an anchor point and orientation for the wall.
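To illustrate how a detected rectangle can yield both an anchor point and a wall orientation, here is a minimal sketch. It is not the custom solution described above: it assumes the four poster corners have already been resolved to 3D world positions (how that happens is outside this sketch), and all names are hypothetical.

```typescript
// Hypothetical types and names for illustration only.
interface Point3 {
  x: number;
  y: number;
  z: number;
}

function subtract(a: Point3, b: Point3): Point3 {
  return { x: a.x - b.x, y: a.y - b.y, z: a.z - b.z };
}

function cross(a: Point3, b: Point3): Point3 {
  return {
    x: a.y * b.z - a.z * b.y,
    y: a.z * b.x - a.x * b.z,
    z: a.x * b.y - a.y * b.x,
  };
}

function magnitude(v: Point3): number {
  return Math.sqrt(v.x * v.x + v.y * v.y + v.z * v.z);
}

function normalize(v: Point3): Point3 {
  const len = magnitude(v);
  if (len === 0) {
    throw new Error("Cannot normalize a zero-length vector");
  }
  return { x: v.x / len, y: v.y / len, z: v.z / len };
}

interface PosterAnchor {
  center: Point3; // anchor point in the middle of the poster
  right: Point3;  // unit vector along the poster's top edge
  up: Point3;     // unit vector along the poster's vertical edge
  normal: Point3; // unit vector pointing out of the wall
  width: number;
  height: number;
}

// Corners are assumed to be world-space positions of the detected rectangle.
function anchorFromCorners(
  topLeft: Point3,
  topRight: Point3,
  bottomRight: Point3,
  bottomLeft: Point3
): PosterAnchor {
  const center: Point3 = {
    x: (topLeft.x + topRight.x + bottomRight.x + bottomLeft.x) / 4,
    y: (topLeft.y + topRight.y + bottomRight.y + bottomLeft.y) / 4,
    z: (topLeft.z + topRight.z + bottomRight.z + bottomLeft.z) / 4,
  };
  const topEdge = subtract(topRight, topLeft);
  const leftEdge = subtract(topLeft, bottomLeft);
  const right = normalize(topEdge);
  const normal = normalize(cross(right, normalize(leftEdge)));
  // Recompute up so the three axes are exactly perpendicular even when
  // the detected corners are slightly skewed.
  const up = cross(normal, right);
  return {
    center,
    right,
    up,
    normal,
    width: magnitude(topEdge),
    height: magnitude(leftEdge),
  };
}
```

The three unit vectors can be packed into a rotation matrix for the anchor, and the measured width and height give the scale for content drawn over the poster.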

This video contains flashing or rapidly changing images.

Aside

I needed something to test implementing an image recognition algorithm and vertical wall placement so I printed out this corgi from Wikipedia and taped it to a wall. I spent days in a cycle of writing code, compiling it, walking up to this corgi, and recording tests.

At the end of the project the corgi disappeared mysteriously. A few days later it showed back up on my desk, framed, as a gift from Brian Baker. I still have it.

Bonus Stuff